I have shown that by introducing targeted drinking schedules for endurance athletes, the 1987 ACSM Position Stand on the Prevention of Thermal Injuries During Distance Running (1) essentially informed runners that they no longer could trust their own bodies to tell them when and how much they should drink during exercise.

Instead, they were subtly led to believe that the only advice they should trust is that which comes from the experts, the vast majority of whom were beginning to develop warm and cozy relationships with the companies producing and marketing sports drinks.

In addition, the industry was supporting research, the goal of which, in my opinion, was to develop another human physiological fable, which I have termed the Zero-Percent Dehydration Doctrine.

Here is how I think it all came about.

The Third Gatorade-Funded Study by Drs. Scott Montain and Edward Coyle (1992)

In 1992, a study was published that specifically investigated the physiological effects of drinking fluid at different rates in high-quality cyclists exercising at high intensity in environmental conditions that would generally be considered unsafe for competitive sport (33 degrees Celsius with relative humidity of 50%) (2). In addition, as Saunders et al. subsequently would show (3), the fans used to cool the athletes during exercise (providing convective cooling, that is, the transfer of heat directly from the skin surface to the surrounding air without sweating) were hopelessly inadequate. This would have added an additional heat stress that the athletes would not have experienced had they been cycling out of doors and generating convective cooling in proportion to how fast they cycled.

The relevance of this failure is fully covered in my book Waterlogged (4, pp. 186-189) and need not detain us here. But in brief, the lack of adequate convective cooling in Montain and Coyle’s laboratory would have increased the rates at which the cyclists needed to sweat in order to maintain safe body temperatures. When extrapolated to athletes exercising out-of-doors, these results from experiments conducted indoors then would support guidelines promoting higher rates of fluid intake than athletes exercising out-of-doors actually would need.

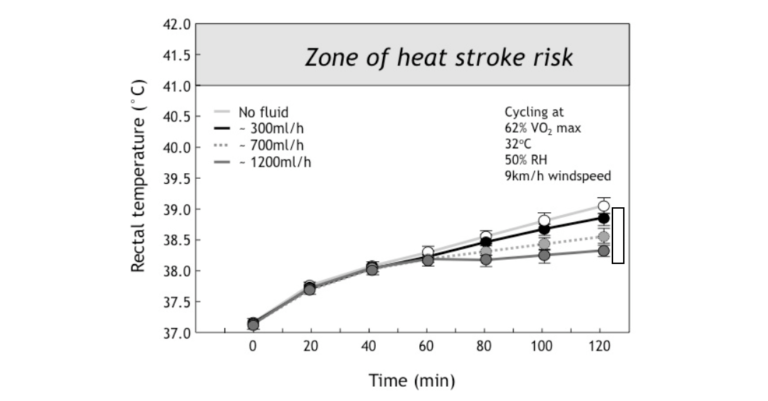

A recent study published 13 years after our original finding has essentially confirmed all our conclusions (5). In the Montain and Coyle study (2), a group of high-quality cyclists performed 2 hours of exercise at 62-67% of their maximum capacity (VO2max) while drinking fluids at rates of either 0, 300, 700, or 1,200 mL/hr. The relevant findings are shown in Figures 1 and 2.

Figure 1: In the study of Montain and Coyle (2), high-quality cyclists exercised at high intensity for 2 hours in hot environmental conditions while drinking either nothing or fluids at three different rates (300, 700, or 1,200 mL/hr). Final rectal temperatures were reduced in proportion to the rates of drinking. However, there was no difference in the rectal temperature responses in the first 60 minutes of exercise (Reproduced from source 4, p. 198).

Figure 1 shows that for the first 60 minutes of exercise in these demanding environmental conditions in which convective cooling was less than ideal, the subjects’ rectal temperatures rose equally, regardless of how little or much the subjects drank.

This already had been established in the first two Gatorade-funded studies discussed previously (6, 7) and therefore can be accepted as a fundamental biological law.

Of course, this conclusion is inconvenient for an industry that is trying to sell the health benefits of drinking fluids during all forms of exercise — regardless of intensity or duration — to all people. It is particularly inconvenient when trying to sell the need to drink excessively to prevent “heat illness” for the simple reason that the vast majority of recreational athletes exercise for less than an hour at a time. In direct contrast to the developing logic of the scientific advisers to the sports drink industry, which suggests that to prevent heat illness during exercise one needs to drink without restraint, these data establish the opposite: specifically, that the vast majority of exercisers can exercise safely even in hot conditions without the need to drink anything during exercise.

Naturally, this was not the message that the public would receive as a result of these scientific findings. Instead, they would receive an opposite message.

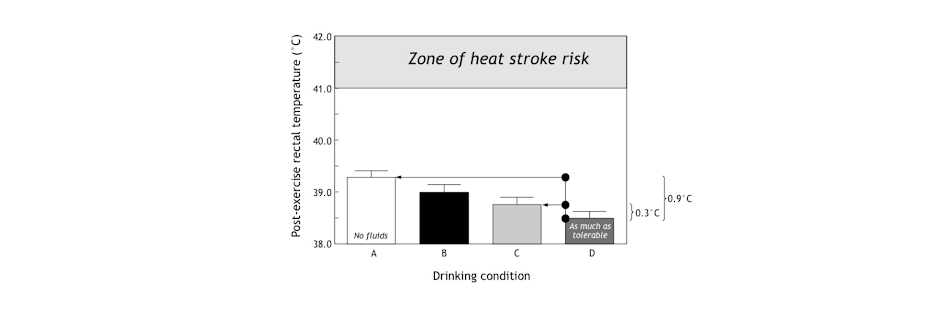

The second finding was that fluid ingestion lowered the post-exercise rectal temperatures in proportion to how much was drunk during exercise. But the effect was hardly dramatic (Figure 2). Drinking at a rate of 1,200 mL/hr versus not drinking lowered the average final rectal temperature by only 0.9 degrees Celsius; the difference between drinking 700 and 1,200 mL/hr was only 0.3 degrees Celsius, a biologically insignificant outcome (Figure 2).

Figure 2: This figure shows that the final rectal temperatures were reduced in proportion to the amount of fluid ingested during exercise. But whereas the difference between not drinking anything and drinking 1,200 mL/hr was 0.9 degrees Celsius, the difference between drinking at 700 mL/hr (which approximates ad libitum, i.e., freely chosen, drinking rates (8)) and drinking at 1,200 mL/hr was only 0.3 degrees Celsius, which is biologically insignificant. Note, also, that in none of these conditions did the final rectal temperatures approach those measured in athletes with heat stroke (Reproduced from source 4, p. 198).

The third finding is the one that was not reported and which therefore tells us something about the original intent of the study.

The researchers found that despite not drinking while exercising very vigorously in these very demanding environmental conditions, no athlete developed a “heat illness,” even after 2 hours of challenging exercise. What is more, the finishing rectal temperatures in all athletes, even when they did not drink, were far below anything that remotely could be called dangerous since none approached values of >41.0 degrees Celsius, which are found in persons with heatstroke.

The fourth finding was that sweat rates were the same regardless of how much athletes drank during exercise. Rates of urine production would have been higher in those drinking more during exercise, but this was not studied. Thus, the higher body temperatures in those who were drinking less or not at all during exercise could not have been because they were losing less heat through sweating.

This destroys another myth that scientists close to the industry have tried to promote over the years: that “dangerous dehydration” causes heatstroke by reducing the ability to sweat (which, according to their illogic, would be cured by drinking copiously during exercise).

Instead, as I have described before, the higher body temperatures in the cyclists when they did not drink represent a well-described mammalian response, best shown by the African antelope, the oryx (9), which is able to live in deserts at temperatures in excess of 45 degrees Celsius without drinking fluids or needing to sweat. It does this by allowing its body temperature to rise when it needs to lose extra body heat but has limited access to water. This phenomenon, known as adaptive heterothermy (10), was also discussed in a previous column. Unfortunately, this phenomenon apparently was unknown to these exercise physiologists, who have never pondered the mystery of the oryx. The oryx’s higher body temperature increases heat loss without wasting precious water as sweat, exactly as happened in the athletes in this study who did not drink any fluids during exercise.

Perhaps the true message of this study should have been that it is perfectly safe for well-trained athletes to exercise very vigorously for up to 2 hours in very hot environmental conditions without drinking anything.

Instead, the results of this study were used to promote the idea that all athletes must drink as much as they can possibly tolerate during all forms of exercise, regardless of the prevailing environmental conditions or any other factors.

This would form the basis for the American College of Sports Medicine (ACSM) to promote drinking at rates of 1,200 mL/hr during all forms of exercise to ensure that dehydration does not develop in anyone during any form of exercise — i.e., the Zero-Percent Dehydration Doctrine.

Was the Montain and Coyle study designed specifically to produce very high sweat rates? If so, it would promote rates of fluid ingestion during exercise that are unrealistic and irrelevant for almost all exercisers, especially (as we will see) for those running slowly in cool to cold environmental conditions.

The sweat rate achieved by the athletes in Montain and Coyle’s study was 1,200 mL/hr. As a result, to prevent any weight loss during exercise, subjects were required to drink at exactly the same (very high) rates.

But how and why did the authors choose to design a study that would produce such high sweat rates and hence the need to drink at similarly high rates? The answer to the “how” question is quite simple, but only the authors and the funders of this research know why they undertook the study.

To begin with the “how”: The study included high-quality athletes who could maintain high metabolic rates (VO2) by exercising vigorously for prolonged periods (2 hours) in hot environmental conditions in which their capacity for convective cooling was limited. Inadequate convective cooling would have increased the need to lose more heat through increased sweating.

Since the metabolic rate (VO2, which is a function of the exercise intensity) determines the extent to which the body temperature will increase during exercise and hence the sweat rate (which increases in proportion to the rise in rectal temperature), these studies were clearly designed to produce high rates of sweating during exercise. High-quality athletes were studied since only superior athletes can sustain high metabolic rates for prolonged periods without fatiguing. Had the athletes been unable to sustain such high metabolic rates for 2 hours, their sweat rates would have been correspondingly lower.

In addition, the study was performed in environmental conditions that would be rated borderline “high risk” by the ACSM guidelines for safe exercise in the heat. These environmental conditions increased sweat rates.

It makes no sense, however, to extrapolate those high rates of sweating and hence the high rates of fluid ingestion necessary to prevent any weight loss during exercise to slower runners running in cooler conditions.

The point is that any experimental study can be designed to favor a particular outcome, and this study favored the finding that (all) athletes must drink at very high rates during exercise.

But if researchers wished to produce guidelines for average athletes completing 26-mile marathons in 4 hours and 30 minutes or slower in mild to cool conditions, then they should have studied athletes running/walking at 5.8 mph (10 minutes and 16 seconds per mile) in the appropriate environmental conditions, not elite cyclists working as hard as they could for 2 hours in environmental conditions that few would consider appropriate for racing a 26-mile marathon.

In contrast, if Montain and Coyle’s study had been performed on recreational marathon runners taking between 4 and 6 hours to complete marathons, the finding would have been that a rate of fluid intake of ~500 mL/hr or less—the typical ad libitum drinking rates (8) that we have been advocating since 1988—would have been more than sufficient to fulfill the Zero-Percent Dehydration Doctrine. In which case, the drinking guidelines subsequently produced by the ACSM in 1996 would have been quite different.

And, as I will argue, the subsequent epidemic of exercise-associated hyponatremia (EAH) need never have happened.

If these guidelines had been drawn up exclusively for elite athletes, they would have been safe, since elite runners soon would have discovered that such high rates of fluid ingestion are simply not sustainable, especially during competitive running. Any athlete trying to drink at a rate of 1,200 mL/hr when running at 11 or more mph soon would be unable to sustain that pace because of the development of uncomfortable gastrointestinal symptoms (11), as occurred in the original study of Dr. David Costill and colleagues (6).

But slow runners with maximum sweat rates of only 500 mL/hr when running a marathon in 4 hours and 30 minutes (12) would be more likely to sustain such high rates of fluid ingestion relatively comfortably, especially if they had the capacity to absorb fluid rapidly across the intestine when running at that pace.

And provided they did not have the Syndrome of Inappropriate Anti-Diuretic Hormone Secretion (SIADH), which prevents urine production and so causes EAH and exercise-associated hyponatremic encephalopathy (EAHE) in those who overdrink, they soon would be passing perhaps as much as 700 mL/hr of urine. Surely, most attempting this would begin (eventually) to question whether it was really necessary to drink so much if they were simply passing it out as urine. Recall that in the study of Susan Barr et al. (13), subjects had to pass in excess of 2 liters of fluid during 6 hours of exercise, even when their aim was just to maintain their body weight during exercise and not to drink as much as “tolerable.”
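The 700 mL/hr figure follows from simple fluid balance: a runner who sweats 500 mL/hr but drinks 1,200 mL/hr takes in 700 mL/hr more than is lost as sweat. The short Python sketch below illustrates that subtraction. It is a simplification that ignores respiratory water loss and any delay in absorption, and the function name is hypothetical, introduced only for illustration.

```python
def hourly_fluid_surplus_ml(intake_ml_per_hr: float, sweat_ml_per_hr: float) -> float:
    """Fluid ingested each hour beyond what is lost as sweat (mL/hr).

    Simplifying assumption: sweat is the only loss considered, so any
    positive surplus must either be passed as urine or retained.
    """
    return intake_ml_per_hr - sweat_ml_per_hr


# Figures from the text: a slow runner sweating 500 mL/hr who drinks
# at the upper recommended rate of 1,200 mL/hr.
surplus = hourly_fluid_surplus_ml(intake_ml_per_hr=1200, sweat_ml_per_hr=500)
print(f"Surplus to be excreted or retained: {surplus:.0f} mL/hr")

# Over a marathon lasting 4 hours and 30 minutes the surplus accumulates.
race_hours = 4.5
print(f"Accumulated over {race_hours} h: {surplus * race_hours / 1000:.2f} L")
```

Under these assumptions, a runner who cannot excrete the surplus retains it, which is the route by which overdrinking is linked to EAH in the text.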

Thus, the logical error made by the authors of the 1996 ACSM Position Stands is strikingly obvious. The studies they cited were designed to measure the maximum rates of sweat loss and fluid ingestion that better-than-average athletes could sustain during prolonged exercise lasting about 2 hours in conditions that were hot enough to be categorized even by the ACSM as either high or very high risk (for heat illness). Drinking guidelines based on those studies then were extrapolated to recreational runners and cyclists who run or cycle at metabolic rates that are two to four times lower than those of these better athletes.

But because recreational athletes exercise at much lower metabolic rates, their real fluid requirements also will be two to four times less than those of the subjects tested in Montain and Coyle’s study. What is more, because recreational athletes exercise at much lower exercise intensities, their much slower breathing rates allow only these slow running athletes to ingest fluid at such high rates.

Their final error was to presume that the drinking rate (1,200 mL/hr) that produced the least increase in body temperature was the healthiest. Since this drinking rate was designed to prevent any weight loss during exercise, we see the birth of the Zero-Percent Dehydration Doctrine.

Which could have been the real goal of the study.

Using Weight Loss During Exercise to Classify a Novel Disease of Exercise: “Dangerous” Dehydration

I came to CrossFit and my involvement in this website at least in part because of my dissatisfaction with the direction that my profession has taken since I graduated as a medical doctor in December 1974. I fear the goal of the profession seems to have become a desire to make everyone a paying customer for life. This is not why I, perhaps rather naïvely, entered the profession.

One way to maintain paying customers is to warn everyone of serious, near-universal diseases which necessitate the prescription of lifelong medications, regardless of whether or not the existence of these diseases is supported or confirmed by adequate scientific research. The most obvious of these spurious diseases is “high cholesterol.”

When I entered medicine, I was taught that for a disease to exist, there must be some diagnostic measurement that clearly distinguishes persons with that disease from those without it. The obvious example is Type 2 diabetes mellitus (T2DM). Doctors diagnose T2DM when the blood-glucose (and/or insulin) levels rise well ABOVE those measured in otherwise healthy people. The point is that there is no overlap between the blood glucose and insulin levels so measured in those with and without T2DM. The distinction between the two groups — one healthy and one diseased — is absolutely clear for all to see.

But with “cholesterol,” this is not the case. Blood cholesterol concentrations in those who will and those who will not develop heart complications in the future overlap almost completely. So how does one make a disease appear when the supposedly diagnostic test cannot distinguish between those who are and those who are not at risk?

The answer is that you make any blood cholesterol concentration (above zero) diagnostic of the (fake) disease. In other words, the only safe blood cholesterol concentration is one of zero mmol/L; all other values indicate that you have the disease of “high cholesterol.” In this way, everyone suffers from “high cholesterol” so that all the world’s people need to be placed on drugs that lower their blood cholesterol concentrations to zero or get them as close as possible.

This is the way the “science” of cholesterol has moved over the past 20 years. Every few years the cholesterol experts, all of whom have very cozy relationships with the manufacturers of drugs designed to lower “dangerously” high blood cholesterol concentrations, produce new guidelines. Each new iteration advises us that we must all achieve even lower blood cholesterol concentrations, until the day we reach industry’s ultimate target: blood cholesterol concentrations of zero in all living humans. (Of course, we all will be dead long before we reach blood cholesterol concentrations of zero.)

It is my argument that exactly the same has happened with fluids and exercise. The actions of the scientists advising the ACSM and related organizations, including NATA, suggest an effort to convince the general population that unless we drink enough to replace all the fluid lost during exercise, we immediately risk dying from “dehydration-induced” heatstroke.

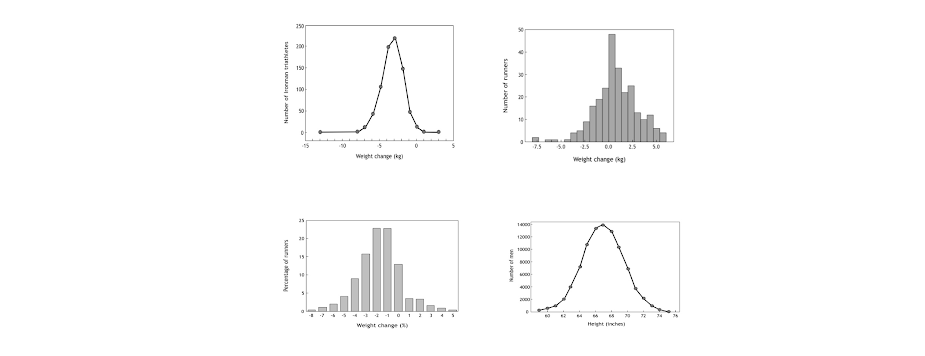

But when we actually measure the extent of body-weight loss in runners after prolonged exercise, we find that it follows what is called a normal distribution (Figure 3: top left and right panels; bottom left panel), similar to that found for height in British soldiers (Figure 3: bottom right panel). There is no distinction between two populations of runners — one healthy and the other diseased — on the basis of how much weight they lose during running races.

Figure 3: The body weight changes (kg) during the 2000 and 2001 South African Ironman Triathlons (14) (top left panel), the 2008 Hong Kong Marathon (15) (top right panel), and the 2009 Mont Saint-Michel Marathon (France) (16) (bottom left panel) are normally distributed, indicating that there is no single cutoff value that separates those with from those without the disease of “dehydration.” This indicates that a specific disease of “dehydration” does not exist, at least not in the competitors in these three athletic events. Note, also, that many more athletes in the South African Ironman Triathlons and Mont Saint-Michel Marathon lose substantial amounts of body weight (>7% kg or >4% body weight) than either maintain or increase their body weights during exercise. In the bottom right panel, the heights of 91,163 British Army recruits studied in 1949 (17) also are normally distributed, indicating that there also is no single height in this population of soldiers that identifies those with a “disease” of short or tall height, just as there is no level of exercise-induced dehydration identifying the presence of “disease” in the athletes studied. There are diseases that cause either very short (dwarfism) or very tall (acromegaly) heights, but their presence generally excludes entry into the military so that those heights do not appear in this distribution.

This normal distribution is perhaps surprising since, whereas height is strongly genetically determined and perhaps 70% of the variation in height can be explained by genetic variance, few would presently argue that 70% of the variation in weight loss during exercise is regulated mainly by genetic factors.

But this is not the key point. The key point is that this normal distribution shows there is not a cutoff value for weight loss beyond which it is possible to conclude that athletes losing more weight than this cutoff value must be “dangerously dehydrated” and therefore at high risk of death.

That is to say, drinking during exercise can never be a “one-size-fits-all” prescription.

Instead, we are all different and will finish running races with different body-weight losses, but our differences will be normally distributed. We are, as ever, an “experiment of one” and the ACSM dictates are no more likely to be appropriate for each of us than they are likely to be wrong.

So, when experts draw up guidelines for drinking during exercise, they should perhaps appreciate this individual variability in human responses to the same stress (of running a competitive race).

But the problem faced by anyone who wishes to make “dehydration” a disease that can be prevented only by drinking appropriately during exercise is that some distinct cutoff level of weight loss must be chosen. But the three diagrams in Figure 3 show that any level that is chosen will be entirely arbitrary.

Now let’s return to the commercial reality.

The profitability of companies producing cholesterol-lowering drugs or drugs to treat hypertension is critically dependent on the cutoff value used to identify “disease.” Similarly, the future income of the sports drink industry will be materially influenced by the decision regarding which precise level of “dehydration” is dangerous.

The key to financial success in all three examples is to make the cutoff values as low as possible so the largest possible proportion of the population is included.

So anyone with commercial intent wishing to influence those responsible for drawing up future guidelines for exercisers would have asked the intriguing question: What if we reduce the “safe” cutoff level of “dehydration” during exercise to the lowest possible value? Which cutoff value of “dehydration” would be the most advantageous for the industry?

For example, if a value of 3% is chosen (as might be logical according to the arguments of Wyndham and Strydom, and Ladell’s concept of a free circulating water reserve of ~2 liters), a 50-kilogram female athlete could lose 1.5 kilograms of sweat before she would be advised to drink. This probably would require about 90 minutes of exercise if she was exercising vigorously at a sweat rate of 1.0 L/hr, or 3 hours if she was sweating at a rate of 0.5 L/hr, as is typical for female athletes taking 5 or more hours to complete a 26-mile marathon (12). Likewise, an 80-kilogram male athlete would need to lose 2.4 kilograms of sweat; this likely would take at least 120 minutes of exercise when exercising sufficiently vigorously to sustain a sweat rate of 1.2 L/hr.
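The times quoted above can be reproduced with a short calculation. The Python sketch below is only an illustration of that arithmetic. It assumes that 1 liter of sweat weighs about 1 kilogram, that the sweat rate stays constant, and that the athlete drinks nothing; the function name is hypothetical.

```python
def minutes_until_cutoff(body_mass_kg: float, cutoff_percent: float,
                         sweat_rate_l_per_hr: float) -> float:
    """Minutes of exercise before sweat loss reaches the chosen cutoff.

    Assumptions (for illustration only): 1 L of sweat weighs 1 kg,
    the sweat rate is constant, and no fluid is drunk.
    """
    allowed_loss_kg = body_mass_kg * cutoff_percent / 100
    return allowed_loss_kg / sweat_rate_l_per_hr * 60


# The three cases described in the text, all with a 3% cutoff.
cases = [
    ("50 kg athlete sweating 1.0 L/hr", 50, 1.0),
    ("50 kg athlete sweating 0.5 L/hr", 50, 0.5),
    ("80 kg athlete sweating 1.2 L/hr", 80, 1.2),
]
for label, mass_kg, sweat_rate in cases:
    minutes = minutes_until_cutoff(mass_kg, cutoff_percent=3, sweat_rate_l_per_hr=sweat_rate)
    print(f"{label}: about {minutes:.0f} minutes")
```

Lowering `cutoff_percent` shortens every one of these times in direct proportion, which is why the choice of cutoff matters so much to the argument that follows.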

Thus, the adoption of a cutoff value of 3% would mean that athletes would need to begin drinking only after they had already exercised for at least 90 to 120 minutes. The athletes who most often do that much exercise on a regular basis are those in the military or those who compete regularly in running, cycling, and triathlon races. The number of such athletes globally is probably closer to millions than to hundreds of millions.

Even a cutoff value of 1% would not produce a great financial return to the industry, as the vast majority of exercisers who spend, for example, 30 to 40 minutes exercising intermittently in the gym or jogging gently likely will not lose more than about 600-800 milliliters (600-800 grams) of sweat. For athletes of 60 to 90 kilograms, this represents a weight loss of roughly 1% or less.
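As a rough check on these percentages, the sketch below converts a sweat loss in grams into a percentage of body mass for the ranges quoted. It is an illustration only, with a hypothetical function name, and it treats all weight lost as sweat. On these inputs the 90-kilogram athlete stays well under 1%, while a 60-kilogram athlete at the upper sweat estimate comes out slightly above it.

```python
def percent_body_mass_loss(sweat_loss_g: float, body_mass_kg: float) -> float:
    """Sweat loss expressed as a percentage of starting body mass.

    Assumption (for illustration only): all weight lost is sweat.
    """
    return sweat_loss_g / 1000 / body_mass_kg * 100


# Ranges taken from the text: 600-800 g of sweat, athletes of 60-90 kg.
for body_mass_kg in (60, 90):
    for sweat_loss_g in (600, 800):
        loss = percent_body_mass_loss(sweat_loss_g, body_mass_kg)
        print(f"{body_mass_kg} kg athlete losing {sweat_loss_g} g: {loss:.2f}%")
```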

Thus, any guidelines that define a dehydration level of 1% or greater as “dangerous” will exempt all but the more serious athletes, those who exercise continuously for more than 90 to 120 minutes, from the need to drink during exercise. As a result, the vast majority of exercisers, perhaps 90%, would be advised that they do not ever need to drink during their regular bouts of exercise.

Could there possibly be a worse nightmare for the top executives of a company producing sports drinks than to receive this message from their advertising departments?

But if the cutoff level identifying “dangerous dehydration” can be reduced to a 0% body-weight loss, then every single exerciser will need to begin drinking from the moment she begins her exercise.

Immediately, the global market for sports drinks becomes hundreds of millions of exercisers who can be advised that they must drink voraciously whenever they exercise—not only on those occasions when they wish to exercise for 90 to 120 minutes.

This simple analysis explains, in my view, why by 1996 the sports drink industry would have been very keen that the cutoff value for “dangerous dehydration” should be set at 0%, which is what I call the Zero-Percent Dehydration Doctrine. Perhaps they subtly conveyed this preference to the scientists with whom they were then cultivating cozy relationships.

Regardless, the two 1996 ACSM Position Stands on Heat and Cold Illnesses During Distance Running and on Exercise and Fluid Replacement very conveniently delivered exactly what would be of greatest commercial benefit to the sports drink industry.

The 1996 ACSM Position Stands Effectively Formalized the Zero-Percent Dehydration Doctrine

The 1996 ACSM Position Stand on Heat and Cold Illnesses During Distance Running (18) and its companion paper, the Position Stand on Exercise and Fluid Replacement (19), repeat many of the errors that were present in the 1987 Position Stand, discussed in a previous column. And then to compound the problem, they added a few new, even grosser errors.

The Position Stand on Heat and Cold Illnesses (18) repeated the false claim that dehydration predisposes to “hyperthermia, heat exhaustion, dangerous hyperthermia or exertional heatstroke.” In part, the error was made because the laboratory scientists who drew up these stands have no medical training, and therefore no idea how to diagnose or manage these conditions.

The truth is that there are only two forms of heat illness that need to be distinguished: exercise-associated postural hypotension (sometimes called post-exercise syncope) (20-22) and heatstroke (23). And neither illness has anything to do with fluid ingestion or its lack during exercise. So their treatment is not primarily fluid replacement (24-25).

The Position Stand also reiterates the dogma that “adequate fluid consumption before and during the race can reduce the risk of heat illness, including disorientation and irrational behaviour especially in longer events such as the marathon.” No new evidence not included in the 1987 Position Stand is presented to support this false statement.

The 1996 Position Stand on Exercise and Fluid Replacement repeats this error: “It is therefore reasonable to surmise that fluid replacement that offsets dehydration and excessive elevation in body heat during exercise may be instrumental in reducing the risk of thermal injury.” No such evidence exists.

The Position Stand also stated: “During exercise, athletes should start drinking early and at regular intervals in an attempt to consume fluids at a rate sufficient to replace all the water lost through sweating [in other words, the Zero-Percent Dehydration Doctrine] or consume the maximal amount that can be tolerated (my emphasis).” The proposed drinking rate was 600-1,200 mL/hr, well in excess of the rates of fluid ingestion we had measured in athletes drinking ad libitum during running and other races (8). And well in excess of sweat rates.

As we will show, female marathon runners in the U.S. would develop fatal EAHE when they followed this advice to the letter.

This Position Stand also made doctrine of the false concept that “the perception of thirst (is) an imperfect index of the magnitude of the fluid deficit (and) cannot be used to provide complete restoration of water lost by sweating.”

Once more the myth is created that mammalian biology, hard won over tens of millions of years of successful evolution, is at fault. And we can only exercise safely if we follow the advice of the self-proclaimed “experts” who advise us to “drink as much as tolerable during exercise” to prevent heat illness, even though there was, and still is, zero evidence to support their conviction.

The Science of Hydration had finally arrived, fully adorned, ready to be adopted around the world.

And based on nothing more than the beliefs of a tiny group of laboratory-based scientists with little exposure to the practical world of marathon running.

Conclusion

Had the point been made that the 1996 ACSM drinking guidelines were drawn up exclusively for elite athletes exercising in very hot environmental conditions for less than 2 to 3 hours, they would have been safe — particularly since elite runners soon would have discovered that such high rates of fluid ingestion are simply not sustainable, especially during competitive running.

And their practical experience would have taught these performance-orientated athletes that they should listen to their own bodies rather than the opinions of the “experts” in the Science of Hydration.

But unfortunately, recreational athletes, especially female marathon runners in the U.S. and those involved in ultradistance endurance events such as Ironman triathlons, made the error of believing the “experts.”

Some, as we shall see shortly, did so with dire consequences.