Clinical decisions and journal editorial decisions are often based on research abstracts alone (1). Consequently, any discrepancy between what an abstract claims and what the data actually show, referred to here as “spin,” can distort the impact of the scientific literature. The authors of this 2019 paper assessed the frequency and nature of spin in the titles and abstracts of psychiatry research as one form of such distortion.

The authors define spin as “the use of specific reporting strategies, from whatever motive, to highlight that the experimental treatment is beneficial, despite a statistically nonsignificant difference for the primary outcome, or to distract the reader from statistically nonsignificant results.”

In other words, spin refers to situations in which a neutral or negative finding is presented as positive.

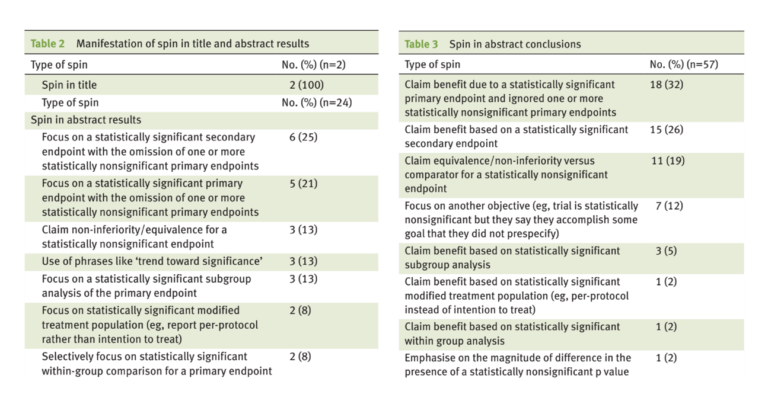

The authors surveyed 116 randomized controlled trials published between 2012 and 2017 across seven major psychiatric journals. Tables 2 and 3 summarize the spin found in this analysis. Some form of spin was present in 56% of papers surveyed.

Common forms of spin included:

- Presenting the results as uniformly positive when some but not all primary outcomes were positive.

- Presenting results as positive based on secondary or non-quantitative outcomes.

- Presenting results as positive based on within-subject comparisons or subgroup analyses.

- Presenting statistically nonsignificant results as positive.

Notably, there was no difference in the frequency of spin between industry- and publicly funded research.

Previous studies have reported similar findings. Two reviews found evidence of spin in more than 80% of the studies they surveyed (2), while a third found that 23% of surveyed trials had abstract conclusions that outright disagreed with the paper’s own results section (3).

These results suggest that spin is pervasive and cannot be predicted solely by factors such as funding source, study design, or publishing journal. For the scientific community, this indicates that stronger oversight is needed to ensure published abstracts accurately reflect the content of each paper. For readers, it illustrates the risk of reading only the abstract: in this sample, more than half of the abstracts at least partially failed to reflect the actual study results.

Notes

1. See “Journal Reading Habits of Internists,” which reports, “[Internists] reported reading only the abstract for 63% of the articles”; “Screening Research Papers by Reading Abstracts,” which reports, “For about two thirds of submissions seen during the study, editors were able to make decisions based on reading only abstracts”; and “Physicians Reading and Writing Practices: A Cross-Sectional Study From Civil Hospital.”

2. “Classification and Prevalence of Spin in Abstracts of Non-Randomized Studies Evaluating an Intervention”; “Spin Is Common in Studies Assessing Robotic Colorectal Surgery: An Assessment of Reporting and Interpretation of Study Results.”

3. “Misleading Abstract Conclusions in Randomized Controlled Trials in Rheumatology: Comparison of the Abstract Conclusions and the Results Section.”